@ShahidNShah

Existing rules for deploying AI in clinical settings, such as the standards for FDA clearance in the US or a CE mark in Europe, focus primarily on accuracy. There are no explicit requirements that an AI must improve the outcome for patients, largely because such trials have not yet run. But that needs to change, says Emma Beede, a UX researcher at Google Health: “We have to understand how AI tools are going to work for people in context—especially in health care—before they’re widely deployed.”

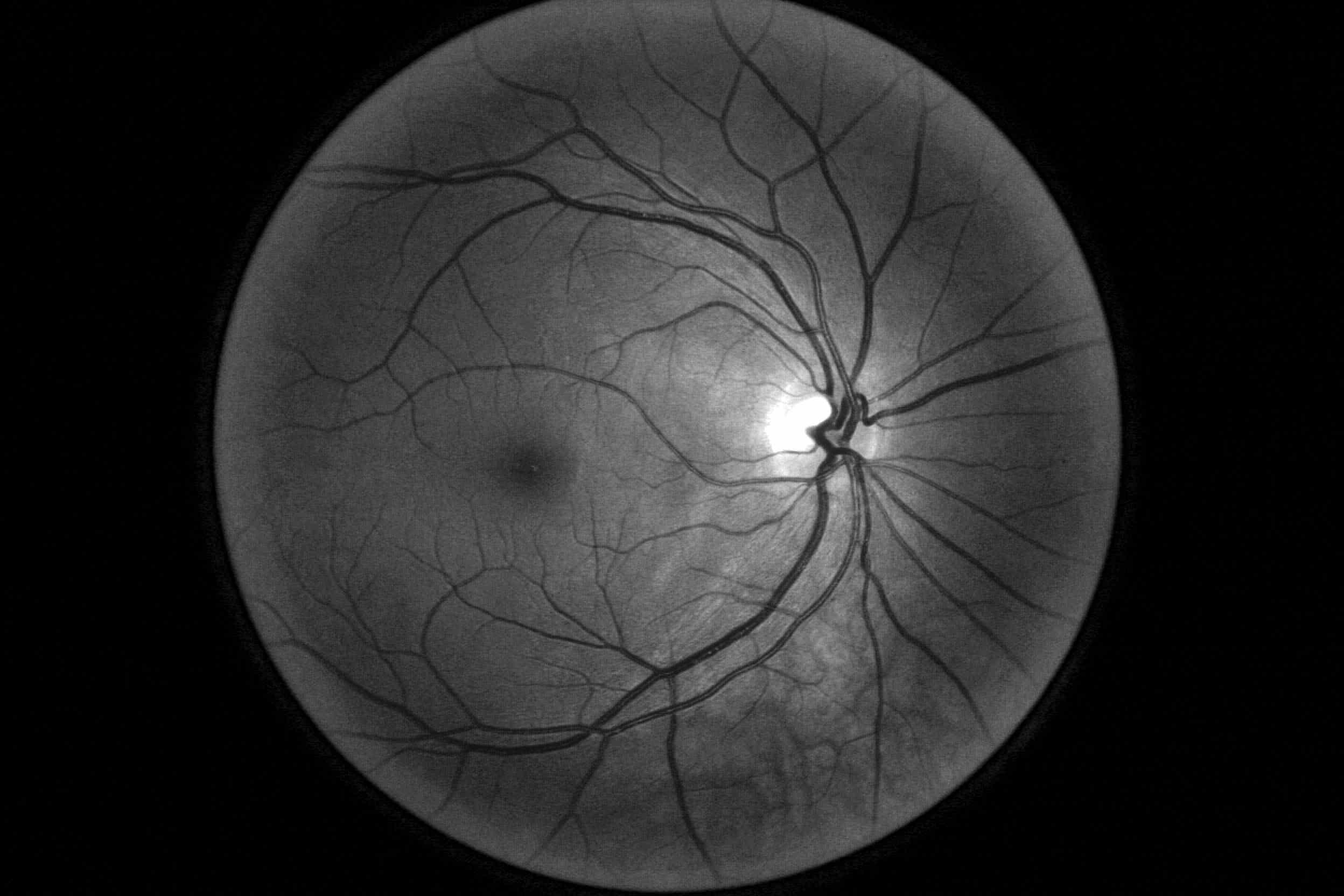

When it worked well, the AI did speed things up. But it sometimes failed to give a result at all. Like most image recognition systems, the deep-learning model had been trained on high-quality scans; to ensure accuracy, it was designed to reject images that fell below a certain threshold of quality.

Continue reading at technologyreview.com
